Introduction

Australian sound engineer and inventor Joe Hayes has been at the forefront of cutting-edge sonic technology for more than 30 years. He continues to impress engineers and musicians around the world with his A3D acoustic technology and its application in the low-cost, high-quality Emergence AS8 speaker system. The system advances the diffusion technology used in many concert auditoriums around the world by producing that diffusion at source in the speakers, rather than through expensive panels and room treatments. Here, Hayes explains the development of that system, and recording engineers and experts Dr Toby Gifford, Ted Orr and Shane Fahey reveal how effective it is in the studio and in the field.

These experts share the perspectives of professionals working at the production stage, drawing on their adoption of the A3D system into their workflows. Supporting their findings, SoundStage! Australia’s Editor-in-Chief Edgar Kramer provides further observations with a subjective listening appraisal of this unique technology.

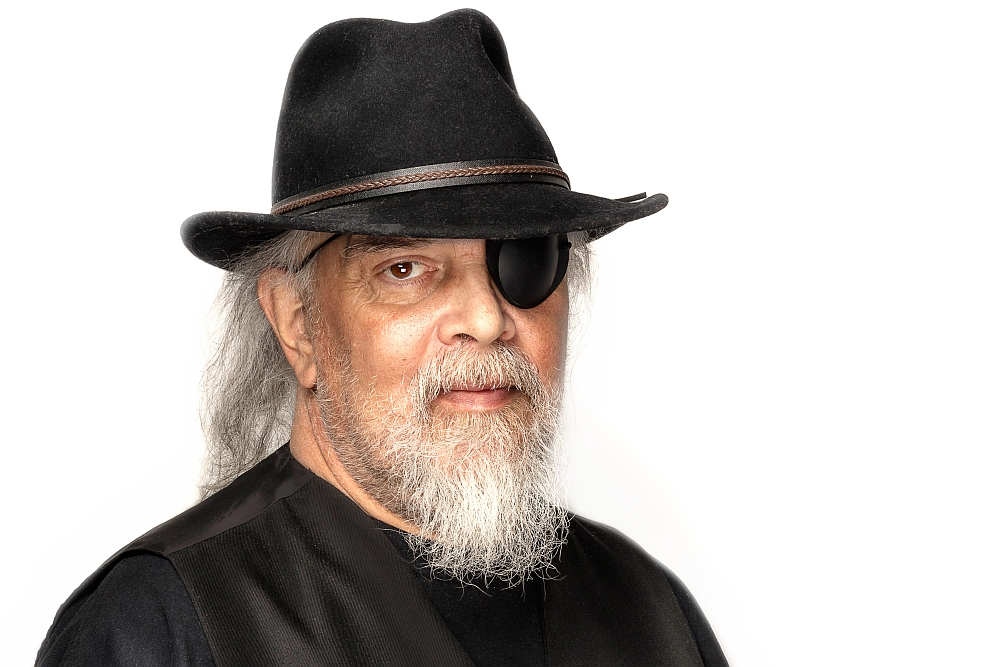

Joe Hayes, Inventor

About two years ago I was asked for a clear technical explanation of what A3D technology offered that was unique. To that point we knew how to fine tune a Quadratic Residue Diffusor to a spherical wavefront and reach a point of audio nirvana where suddenly the audio cues of an acoustic space jumped out of the image. But that in itself did not explain why this happened.
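For readers unfamiliar with the Quadratic Residue Diffusor mentioned above, the following is a minimal sketch of the textbook Schroeder design for a flat panel, in which well depths follow the sequence n² mod N for a prime N. It is not the A3D geometry, which is tuned to a spherical wavefront and is not specified in this article. The function name, the choice of N = 7, the 2,000Hz design frequency and the 343 m/s speed of sound are all assumptions chosen for illustration.

```python
def qrd_well_depths(prime_n, design_freq_hz, speed_of_sound=343.0):
    """Return (sequence, depths in metres) for one period of a Schroeder QRD.

    Textbook flat-panel formula, used here only as an illustration:
        s_n = n^2 mod N
        d_n = s_n * wavelength / (2 * N)
    """
    wavelength = speed_of_sound / design_freq_hz
    sequence = [(n * n) % prime_n for n in range(prime_n)]
    depths = [s * wavelength / (2 * prime_n) for s in sequence]
    return sequence, depths


# Hypothetical example values: N = 7 wells, 2,000 Hz design frequency.
sequence, depths = qrd_well_depths(prime_n=7, design_freq_hz=2000.0)
print(sequence)
print([round(d * 1000, 1) for d in depths])  # well depths in millimetres
```

The sequence is symmetric about its midpoint, which is why QRD panels have their characteristic mirrored pattern of wells.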

After considering just about everything available in the academic literature covering wavelets, seismic science DSP, diffusion, contemporary cochlea psycho-acoustics and neural biology, it was a Melbourne-based academic, Dr Toby Gifford, who facilitated a breakthrough for us. We hired Toby to build a simple Matlab model of A3D and of normal piston radiators. The result was published at the AES Conference of Automotive Audio in 2017.

The model showed A3D emulates a point source: the sound-field radiates power uniformly in all directions. Anyone who understands the art will know this is a good thing, but it is not necessarily unique to A3D. Then we noticed an unusual ‘shimmering’ quality in the polar sound fields, acting at a higher multiple of the signal frequency. The wild thing about all of this is that with the A3D process you hear the room cues in the recording as though they are those of the listening space. It is like they are real acoustics.

Purple = 15,000Hz, Yellow = 9,000Hz, Red = 5,000Hz, Blue = 2,000Hz. Across the bottom are 8 cycles of 2,000Hz. The animation shows sound intensity patterns as the 2,000Hz sine wave moves through its cycles. It’s these changing patterns (which change at 4 x the frequency of the signal) that completely diffuse the listening space, eliminating specular reflections.

Then recently Toby broke through on this shimmering quality. Using his considerable math skills and understanding of time and space manifolds, he realised the shimmering in the A3D wavefront is an Eikonal of the QRD itself!

But before Dr Gifford explains this in his own words, it’s important to mention one of the great contributors to A3D, loudspeaker engineer Brad Serhan, who provided guidance on fine-tuning and voicing. I knew we needed someone who was prepared to take on technical challenges. In the movie Apollo 13, Jim Lovell's mum is quoted as saying “... if they could make a washing machine fly, Jim could fly it!” Similarly, I say “… if you can make it vibrate, Brad Serhan could tune it to its maximum musicality!”

Brad was fearless in tuning the first A3D systems and, through that, we first noticed “another presence” in the sound field. Through his dedication to his craft, we were able to determine the tolerance we could work with. As a direct result, this new technology is now mature enough for us to license it.

Dr Toby Gifford, Research Fellow, SensiLab Monash University

A3D technology emulates a point source: the sound-field radiates power uniformly in all directions. This minimises the sound-field distortion from self-interference that is typical of traditional membrane drivers. In particular, it streamlines the air particle velocities along the direction of radiation, minimising off-axis (tangential) energy that is associated with non-uniform sound radiation. As a consequence, spatial information encoded in a stereo recording is maintained in pristine form by A3D, whereas traditional loudspeakers distort this information by confounding it with directionally confusing ‘eddies’ formed by self-interference. Thus, A3D enhances signal to noise ratio by allowing physically larger drivers (with greater capacity to excite a sound-field) to behave like small point sources.

Additionally, an A3D sound-field has advantages over an 'ideal' point source. Both A3D and a point source radiate power uniformly in all directions; however, an A3D sound-field is directionally phase auto-de-correlated. In other words, if f(a) is the phase of the sound-field on the surface of a sphere of fixed radius around the source, at a fixed time, as a function of the azimuth angle a, then f(a) is uncorrelated with any azimuthal rotation of itself. This is important because phase correlations give rise to phantom images when the sound-field is reflected from a hard flat surface (like a wall). The brain interprets these images as reverberance, and so they overlay spatial perception of the room on top of the spatial information encoded in the audio (and intended by the producer). Since a sound wave reflects from a flat surface according to the angle of incidence between a surface of constant phase (i.e. a wave-front) and the normal vector of the surface, de-correlating the sound-field phase means that no coherent reflections are formed.

Thus, the spatial signals communicated to the listener are just those encoded in the recording, without overlaying the particular (and often undesirable) geometry of the listening space.
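The idea of a phase pattern that is uncorrelated with rotations of itself can be illustrated numerically. The sketch below is not the published A3D model; it assumes, purely for illustration, that f(a) is sampled at N equally spaced azimuths and that the phase follows a quadratic residue sequence (2π·n²/N for a prime N), which is the sequence underlying a QRD. It compares the circular autocorrelation of that pattern with the pattern of an ideal point source, whose phase is the same in every direction. The function names and N = 17 are hypothetical choices.

```python
import cmath


def circular_autocorrelation(values):
    """Correlate a sampled azimuthal pattern with every rotation of itself."""
    n = len(values)
    return [
        sum(values[i] * values[(i + shift) % n].conjugate() for i in range(n))
        for shift in range(n)
    ]


def quadratic_residue_phasors(prime_n):
    """Unit phasors whose phase is 2*pi*(k^2 mod N)/N at azimuth sample k."""
    return [
        cmath.exp(2j * cmath.pi * ((k * k) % prime_n) / prime_n)
        for k in range(prime_n)
    ]


N = 17  # assumed number of azimuth samples (an odd prime)
qr_pattern = quadratic_residue_phasors(N)
uniform_pattern = [1 + 0j] * N  # ideal point source: identical phase everywhere

qr_corr = [abs(c) for c in circular_autocorrelation(qr_pattern)]
uniform_corr = [abs(c) for c in circular_autocorrelation(uniform_pattern)]

print("QR pattern, largest correlation at a non-zero rotation:", max(qr_corr[1:]))
print("Uniform pattern, correlation at every rotation:", uniform_corr[1])
```

Under these assumptions the quadratic residue pattern correlates with itself only at zero rotation, while the uniform pattern is fully correlated at every rotation, which is the distinction the paragraph above draws between A3D and an ideal point source.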

Ted Orr, Head Engineer Sertso Studio

I live in the artist-rich ‘most famous small town on Earth’, Woodstock, NY. I was drawn here by the Creative Music Studio (CMS), a school for avant-garde jazz and world music which was started by Ornette Coleman and is now run by award-winning vibraphonist/pianist/composer Karl Berger.

During my time at CMS I developed many lasting relationships with some amazing musicians. I was also beginning to experiment with sampling, but found MIDI guitar controllers to be too slow. That’s when I was introduced to the Australian music manufacturer Passac and their MIDI guitar, the Sentient Six. I tried it out and was blown away because it actually worked, unlike other models I had tried! Eventually, I was hired to be a product specialist and demo it at trade shows and music stores.

I was immediately impressed with the company and how open to suggestions they were. I met company president and founder Joe Hayes in 1984 at their San Jose offices. Joe always conveyed a sense that he could see issues from a completely different point of view than most and come up with solutions very quickly. I remember discussing a unity gain rack mount mixer for my guitar rack and he grabbed a piece of paper and started designing it right there and then, and it became the Passac Unity Eight. That unit became the centrepiece of many guitarists’ racks in the 90s including those of the custom rack designer of the stars, Bob Bradshaw.

As the 90s rolled in Joe was ready to move on from Passac. I had a chat with him about future endeavours, and we joked about him using fractal mathematics to come up with a “dereverberator”, a studio tool to remove excess room reflections. I heard from him again in about 1995, saying he had used fractal mathematics to come up with a revolutionary speaker design that could produce a “holographic” image, and would I be interested in delivering a pair to the renowned producer, Bill Laswell, who I knew from our shared alma mater, the Creative Music Studio.

I tried them out too. At the time I was working a lot at Applehead Studio in Woodstock, when it was at Michael Lang’s studio space, built for Joe Cocker. Studio owner Mike Birnbaum and I were immediately amazed by the size of the stereo sweet spot and the depth of image coming from these funny looking things. It was like the image could be heard in stereo from anywhere in the room, something none of the other box speakers could do at all. Joe had taken them to the Musikmesse in Germany and someone wrote them up as being “the greatest revolution in speaker technology since the cone”. Pretty damn impressive statement!

I reconnected with Joe a few years ago as he was gearing up for manufacturing of the AS8 design and had just done a demo of the new model at Princeton University. He generously asked if I would like to take those speakers rather than ship them all the way back to Australia. They arrived a few days later and I plugged them in at Karl Berger’s Sertso Studio, where I am the head engineer. Again I was amazed at the stereo image, which seemed to follow you no matter where you were positioned in the room.

Joe had remarked how they sound the same in a tiled bathroom as they do outdoors, in that their design eliminates the room reflections (which made me think of the ‘dereverberator’ we had talked about years before). This feature was of particular interest to me at Sertso, because the control room is small and bass frequencies tend to sound bigger than they are in reality because of the room shape and size. I began using them for mixing and mastering and was again immediately impressed with the results, which translated very well to all other playback systems.

Sertso Studio is primarily used for Karl’s personal jazz projects, and our bread and butter comes from doing digital archives, for which we have so far received two Grammy Foundation grants. Our initial archive was the Creative Music Studio archive of 450 tapes recorded from 1973 to 1983. When the speakers arrived I had just finished tracking a jazz sextet record for Karl. The bassist, Ken Filiano, has very particular sonic requirements for his pizzicato and arco playing. Because of the bass issues with the room, these were “hit or miss” repairs and required going to speakers in other rooms to hear the ultimate effect of the changes being made. With the new speakers, I could instantly hear with much greater clarity than ever before exactly what needed to be changed with regard to the mix. Ken came for an editing session and we basically flew through the alterations and every one sounded great, no matter what we played them back on.

In the few months I’ve had the speakers I have mastered five records (and went back and remastered a few of my own), as well as about 50 mixes, and now consider them to be one of the most valuable and useful tools in my audio chain.

When Joe asked if I knew of other prominent musicians I could connect him with, I said ‘absolutely!’ Musicians had to hear this. I’d been working (sometimes with my daughter Alana, the duchess of funk, on bass) for a decade with P-Funk offshoot band, 420 Funk Mob. I played them for the band leader, Michael Clip Payne. I met Clip as an engineer working on some of his projects, and have continued to engineer and play on almost all of his subsequent albums. He was very familiar with the way my studio sounded before getting these speakers and he too was immensely impressed by the sound once he heard them. I also contacted our sometime drummer, Zachary Alford (David Bowie, B52s, Bruce Springsteen), who was also tremendously impressed.

My son James is opening a multimedia facility for video, photography, 3D animation and music production, and these will certainly travel there with me on the sessions I will engineer for him.

Shane Fahey, Sound Artist, Acoustician & Sound Engineer

In September 2015 I was invited to contribute to an art ecology project in Sydney, which was inspired by curators Susan Milne (Eramboo Artist Environment) and Katherine Roberts (Manly Art Gallery & Museum). The Ku-ring-gai pH Art Science project consisted of 10 artists and 10 scientists. There was a large cross-section of artists that worked across many media and likewise the scientists were from diverse backgrounds and disciplines. The gist of the project was that 10 pairs of artists and scientists were chosen by the curators to collaborate on an exploration of ‘natural’ processes occurring within the Ku-ring-gai Chase National Park. The collaborating pairs were left to formulate an area of study that would be ‘knocked up’ into an installation piece and be exhibited in Manly Art Gallery & Museum 15 months later in December 2016.

I worked with public artist/sculptor Greg Stonehouse. I was very interested to find some habitats on the west side of the park that offered suitable resources for avian biodiversity. As Greg and I began exploring these habitats I became aware of the possible evolutionary links between the acoustic propagation characteristics of different ecology profiles due to their landform, soil type and plant communities and the salient character of various bird species and their birdcalls. The following is a summary of how Greg and I approached the sound component of the project and the added incisiveness of listening with and learning from our field recordings using the Emergence AS8 stereo sound system. Simultaneous stereo field recordings using 3 x Zoom audio recorders were conducted between April and November 2016, in the western arm of Ku-ring-gai Chase National Park on Sydney’s northern sandstone basin rim.

We used Zoom H4n, H5 and H6 handheld audio recorders, mostly just with the built-in cardioid microphones in X-Y configuration. The recorders were spaced 50 to 100 metres apart for many of the recordings at Location 5. At other times we used a more complex single-point recording setup using the Zoom built-ins, 2 x AKG 451 condenser microphones in either X-Y or ORTF configuration, a Sennheiser MKH8020 omnidirectional mic and a Rode NTG4+ shotgun mic.

Some recordings were made with the recorders spaced up to 500 metres apart in order to observe how various birdcalls excited the ‘global acoustic space’ of the upper valley. It became evident on mixing these recordings that there are also closer intermediate ‘acoustic spaces’ or sound fields that are governed more by the power and specific orientation of calls, which propagate longitudinally across the valley profile. Then there are localized sound events that are close to a specific recorder with minimal acoustic reflections within its particular locale. Sometimes the sound sources are quite distant but, due to their radical off-axis relationship to the other recorders, their signals are picked up by only one recorder.

Now, when I say we are mixing the environmental recordings, it is not like mixing music tracks or making ‘soundscapes’, be they electronic, instrumental or organic based. The process, in this case, is a lot less interventionist and more about ‘reaching into’ the recorded domain to unloosen and pull out threads and shifting sequences of sound segments from a complex weave of multiple overlapping recorded ‘acoustic spaces’. The classic sound stage of ‘true acoustic’ music performance spaces is not the most relevant paradigm when recording in complex outdoor (non-human) environments. However, when condensing the resultant mix into a listenable stereo or surround sound product, the sound stage model is helpful to balance and tie it all together. The major difference in the recording process is that there is no one dominant enclosed and hence ‘coloured’ acoustic space. There are multiple shades of partial enclosure, ‘aberrant’ reflection patterns and very distinct echoes; not to mention volatile atmospheric conditions and surging ambient levels due to transportation sound.

After applying a global EQ and Limiter with 3dBm Input Gain to the Master Stereo Out track, we can begin to assess what is happening in each of the recorded tracks from the three recorders. There was a tendency for most of the recordings at Ku-ring-gai Chase National Park to be on the soft side when using the Zooms’ built-in mics and pre-amplifiers. The global EQ is usually between + & – 2.5dBm for the various pertinent centre frequency bands chosen.

The AS8 sound system is very responsive to mild limiting and EQ boosts. Applying such limiting to an environmental recording perceptually enhances the width of the stereo image furnished through the AS8’s reflection grating process, plus it has the unusual quality of subtending an arc through the ‘focal centre’ of the image to its left and right extremities. This effectively enhances the ‘depth of field’ of the image. When accompanied by a strategic EQ boost near the fundamental tonal frequency of a dominant harmonic series of the sound source, the presence of the targeted sound source is ‘enlivened’ via perceptual irradiation in the mid to high frequency band region. It was ‘normal’ to apply a 2.5dBm boost at around 80Hz for almost every recording to give a more realistic rendition of the low frequency content in the transportation sound that impacts on the Hungry Track environment. Transport sounds that contribute to the overall Leq (dBA) – continuous energy level of the environment are aircraft flyovers (jet and propeller planes), helicopters and traffic sounds along the ridge roads. Sea craft around the western side of Pittwater generally have a lot less impact than aircraft and vehicular traffic noise on the prevailing ambient conditions.

One of the challenges with the audio production of environmental recordings in primarily non-human habitats is how to gauge the ‘breath of the prevailing atmos’. High gain recordings of quiet environments will result in a continuous broadband noise, which establishes a ‘sound horizon’. This is an original “soundscape” descriptor, which acknowledges the degree of limitation to human auditory acuity when an auditor is physically moving through, into and out of shifting sound fields within the physical/‘concrete’ environment. The ‘atmos breath’ is determined by air movement or currents; breeze in the trees, i.e. leaf rustle, which is more pungent for sclerophyll forest (sclerophyll means hard leaf); background ambience of transportation density and movements; air humidity; air and land temperature; wind direction; time of the working week; and the noise floor of the audio recording chain.

The AS8 sound system is very effective in scanning the ‘atmos’ ambience of a recording from 100Hz to 900Hz with a parametric EQ filter set at + or – 6dBm and Q between 1.8 & 3.0. Sometimes a boost of up to 1.5dBm between 100Hz and 160Hz or a dip of 1.5dBm around 450Hz can help to balance the foundational background of the recording so that the narrative explicated by the active sonic actors can unfold more seamlessly. Scanning the recording of recorder 2 with a parametric EQ filter with a Q of 1.8, I liked the effect of a slight boost at 260Hz. It enhanced the depth of field of the recording and had the effect of spatially differentiating the virtual localization of some of the birdcalls captured simultaneously by recorders 1 and 2. They now no longer all seem to be at the same distance from the focal listening centre of the stereo image. A boost at 1060Hz enhanced the clarity of the birdcalls and also spatially differentiated their virtual position in the stereo image. Two or three different birds of the same species can be clearly identified as calling from well defined positions within the environment.
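The parametric EQ moves described above can be made concrete with a small sketch. It uses the widely published peaking-filter formulas from the Audio EQ Cookbook, which is not necessarily how the equaliser used on this project is implemented. The 48kHz sample rate is an assumption, as is the 1.5dB size of the “slight boost” at 260Hz; the Q of 1.8 comes from the text. The quoted figures are treated here as decibels of gain.

```python
import cmath
import math


def peaking_eq_coefficients(sample_rate, centre_hz, gain_db, q):
    """Biquad peaking-EQ coefficients (Audio EQ Cookbook form), normalised so a[0] = 1."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * centre_hz / sample_rate
    alpha = math.sin(w0) / (2 * q)
    b = [1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp]
    a = [1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp]
    return [x / a[0] for x in b], [x / a[0] for x in a]


def gain_db_at(b, a, sample_rate, freq_hz):
    """Magnitude response of the biquad, in dB, at a single frequency."""
    z_inv = cmath.exp(-2j * math.pi * freq_hz / sample_rate)
    numerator = b[0] + b[1] * z_inv + b[2] * z_inv ** 2
    denominator = a[0] + a[1] * z_inv + a[2] * z_inv ** 2
    return 20 * math.log10(abs(numerator / denominator))


SAMPLE_RATE = 48000  # assumed; the article does not state the recording rate
b, a = peaking_eq_coefficients(SAMPLE_RATE, centre_hz=260.0, gain_db=1.5, q=1.8)

for freq in (100, 260, 450, 1060, 10000):
    print(freq, "Hz:", round(gain_db_at(b, a, SAMPLE_RATE, freq), 2), "dB")
```

Sweeping `centre_hz` from 100Hz to 900Hz with a larger `gain_db` is a rough equivalent of the ‘scanning’ process described above, with the printed response showing how narrowly a given Q confines the boost or dip.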

This is what Joe Hayes, the inventor of the AS8 sound system, calls a higher resolution of “spatial laminations”. The spatial proximities of the sound sources are less contained within apparent concentric rings. There is much more connectivity between all the varying degrees of proximity. Their respective distances are not perceived as group transitions from a reference point but are each uniquely relational to each other as well as to a reference point.

In effect, the AS8 achieves the requisite spatial resolution not just of sound sources and their sound events but of polymorphous acoustic spaces that simultaneously overlay with each other, within complex outdoors acoustic environments.

Edgar Kramer, SoundStage! Australia Editor-in-Chief – Listening Impressions

As alluded to by the artisans above, the soundfield these diminutive transducers can reproduce is quite astonishing, both in terms of its all-round enveloping and its verisimilitude to the sound cues of the environments being replayed. There’s a massive spread in all directions without sacrificing image specificity or the indications of what’s been captured in the recording. Any of my staple skilfully-produced live recordings such as Harry Belafonte’s, Tommy Emmanuel’s, Ryan Adams’ and The Weavers’ were absolutely magnificent in their replication of the live events and the venues’ inherent acoustics.

While the subwoofer is only a small driver in a small box, there’s enough mid-bass punch to satisfy most small to medium-sized room contexts. I actually tried the satellite speakers both on sturdy stands and on a credenza on either side of a large TV display. In both settings, the speakers pulled off their disappearing magic trick. I preferred the credenza placement, in the end, due to a small increase in midrange solidity and body that carried down to the upper bass, with those frequencies sounding somewhat fuller, less midrange-forward. An added benefit of that particular placement was the ability to incorporate the AS8 into an AV context where movie soundtracks and concert videos were brought to life… even if the laws of physics denied the last couple of bass octaves. Also of benefit is the subwoofer module’s Bluetooth connectivity option which allows smart device content streaming.

Almost literally, it’s a mirage the way the AS8 system can produce not just an amazingly wide, deep and tall soundscape, but also in the way it retains surprisingly tight image placement as you move around the room. In off-axis positions where conventional-design speakers will give you a single channel flat-pack sound, the AS8 system preserves a large portion of the soundstage you get at the optimum ‘sweet spot’.

While there’s solid science and mathematics in the design of the lens above the main driver, as far as this writer is concerned, the proof is always in the pudding. And for these ears, the pudding exhibits plenty of ‘proof’. Even if it effectively does also diffuse, this is no simple ‘diffusor’, the likes of which we’ve seen from several loudspeaker companies which use drivers firing into a cone or sphere. In addition to that task, it is achieving superior outcomes via further strategies. The lens’ slat formations, the structure’s precise angle, the mix of materials chosen, the specific drivers used, the nature and execution of the crossovers and more, are all the products of specific calculations and decisions that have impacted on the subjective association of a given recording’s ambience with spatial naturalness. And it’s that “naturalness” and openness that has been embedded in our psyche for thousands of years. With the AS8, a natural reproduction of ambience may finally be virtually replicated.

A3D Emergence AS8 Holographic Loudspeaker System

Australian & Global Distributor: New Audio US

+1 347 790 0772

www.newaudious.com

NOTE: A3D Technology can be licensed to speaker manufacturers through Vastigo Ltd, a company based in Singapore.

A3D Licensing Company: Vastigo Ltd

www.vastigo.com